Acoustic Perception

This project explored how natural, socially meaningful sounds can improve learning and engagement in cognitive training. Using a fully autonomous touchscreen system designed for common marmosets, we showed that ecologically valid acoustic cues such as conspecific calls lead to faster learning than artificial tones.

The system (MXBI) operates directly in the animals’ home enclosures, where each animal is identified via RFID and interacts voluntarily with sound-based tasks. By adapting task difficulty in real time and removing the need for social isolation or food deprivation, it turns cognitive testing into an enrichment activity aligned with the animals’ natural behaviors and motivations.
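The text does not spell out how difficulty adapts. As a minimal sketch, assuming a windowed up/down rule applied separately to each RFID-identified animal, the bookkeeping could look like this. The class `AdaptiveLevels`, the tag strings, and the window and promotion/demotion thresholds are all hypothetical, not MXBI parameters.

```python
from collections import defaultdict, deque


class AdaptiveLevels:
    """Hypothetical per-animal difficulty tracker keyed by RFID tag.

    Windowed up/down rule with illustrative values (not MXBI's): once `window`
    outcomes are logged, each new trial checks the last `window` trials and
    promotes on >= promote_at hits or demotes on <= demote_at hits.
    """

    def __init__(self, n_levels, window=20, promote_at=16, demote_at=8):
        self.n_levels = n_levels
        self.window = window
        self.promote_at = promote_at
        self.demote_at = demote_at
        self.level = defaultdict(int)  # RFID -> current level (0 = easiest)
        self.history = defaultdict(lambda: deque(maxlen=window))  # recent outcomes

    def record(self, rfid, hit):
        """Log one trial outcome for the animal with this RFID; return its level."""
        hist = self.history[rfid]
        hist.append(1 if hit else 0)
        if len(hist) == self.window:
            hits = sum(hist)
            if hits >= self.promote_at and self.level[rfid] < self.n_levels - 1:
                self.level[rfid] += 1
                hist.clear()  # start a fresh window at the new level
            elif hits <= self.demote_at and self.level[rfid] > 0:
                self.level[rfid] -= 1
                hist.clear()
        return self.level[rfid]
```

Because all state is keyed by the tag, animals sharing one device progress independently: `levels.record("tag-A", hit=True)` updates only animal A's history and level.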

The study demonstrates that aligning experimental design with ecological relevance benefits not only data quality but also animal welfare, a principle that now guides much of my work at the interface of neuroscience, technology, and ethics.

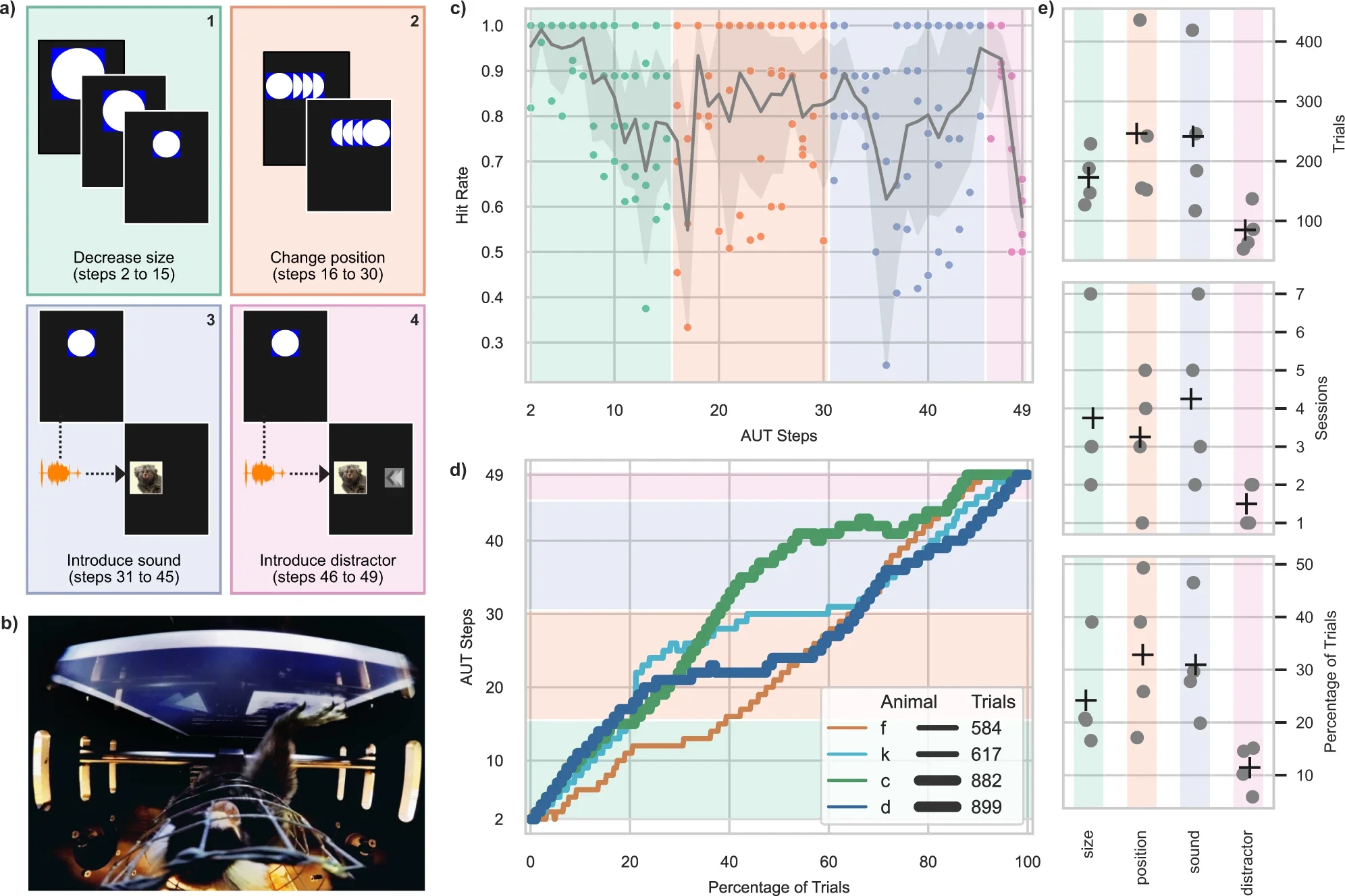

This figure demonstrates that marmosets can autonomously learn and perform sound-based tasks when the acoustic environment and interface are designed to match their natural perception. Over repeated sessions, the animals consistently improved their ability to associate specific acoustic cues with visual choices on the touchscreen, performing hundreds of trials per day without supervision.

The data reveal that auditory cognition can be trained and measured in a fully self-directed, home-cage setting, especially when the sounds are engaging and ecologically meaningful. Compared with artificial tones, biologically relevant vocalizations prompted the marmosets to explore and respond more actively, reflecting both curiosity and sustained motivation.

In short, the figure captures a core message of the study: that sound—when used naturally and contextually—can drive voluntary participation and learning, bridging scientific precision with animal welfare.

As first author, I developed the hardware and software architecture, the adaptive algorithms, and the behavioral validation framework. This project exemplifies how thoughtful system design can make scientific research more humane, scalable, and translational, bridging the gap between animal cognition and human-centered acoustic technologies.

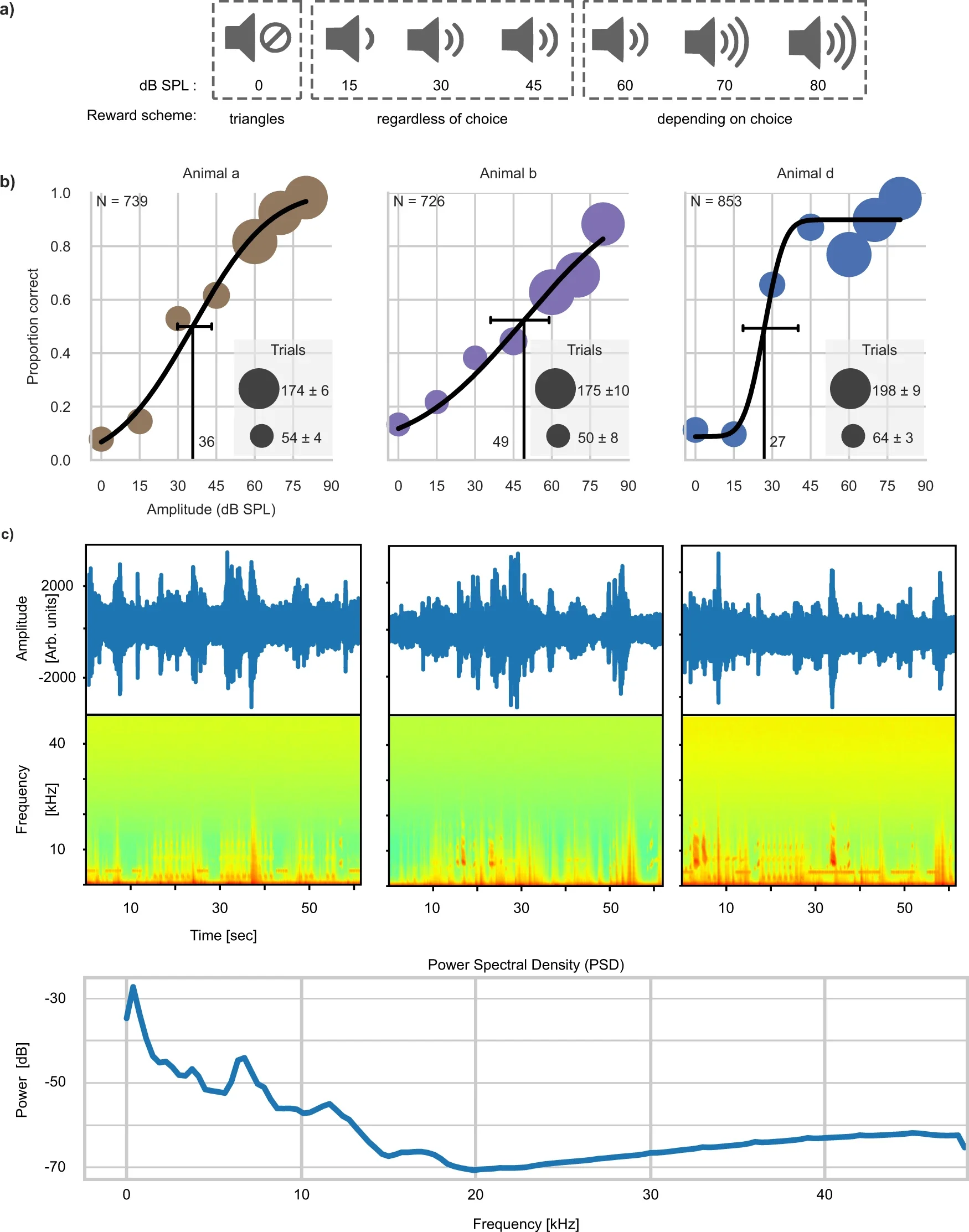

This figure shows that marmosets’ hearing thresholds can also be estimated automatically within the MXBI system.

Together, the panels demonstrate that accurate, welfare-friendly hearing assessments can be performed fully automatically inside social enclosures, without removing animals from their environment, while the animals engage in game-like tasks, ideally built on ecologically relevant sounds.
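One common way to obtain a hearing threshold with a 95% confidence interval, like those reported in panel (b), is to fit a logistic psychometric function by maximum likelihood and bootstrap the trial counts. The sketch below assumes a two-parameter logistic (threshold = sound level at 50% detection, no guess or lapse terms), a brute-force grid search, and a binomial bootstrap. This is not necessarily the procedure used in the study, and the usage data are simulated rather than measured.

```python
import numpy as np


def psychometric(level_db, threshold, slope):
    """Two-parameter logistic: probability of a 'detected' response at each level."""
    return 1.0 / (1.0 + np.exp(-slope * (level_db - threshold)))


def fit_threshold(levels, n_detected, n_trials,
                  thresholds=np.arange(0.0, 80.25, 0.25),
                  slopes=np.linspace(0.02, 1.0, 50)):
    """Maximum-likelihood fit by grid search over (threshold, slope)."""
    t = thresholds[:, None, None]                  # shape (T, 1, 1)
    s = slopes[None, :, None]                      # shape (1, S, 1)
    p = psychometric(levels[None, None, :], t, s)  # shape (T, S, L)
    p = np.clip(p, 1e-9, 1 - 1e-9)                 # avoid log(0)
    # Binomial negative log-likelihood (constant terms dropped), summed over levels
    nll = -np.sum(n_detected * np.log(p) + (n_trials - n_detected) * np.log(1 - p), axis=-1)
    i, j = np.unravel_index(np.argmin(nll), nll.shape)
    return thresholds[i], slopes[j]


def bootstrap_threshold_ci(levels, n_detected, n_trials, n_boot=200, seed=0):
    """95% percentile CI: resample detections per level from observed proportions."""
    rng = np.random.default_rng(seed)
    p_obs = n_detected / n_trials
    boot = [fit_threshold(levels, rng.binomial(n_trials, p_obs), n_trials)[0]
            for _ in range(n_boot)]
    return tuple(np.percentile(boot, [2.5, 97.5]))


# Usage with simulated data (true threshold 40 dB SPL; not the study's data)
rng = np.random.default_rng(1)
levels = np.array([0.0, 15.0, 30.0, 45.0, 60.0, 75.0])
n_trials = np.full(levels.shape, 100)
n_detected = rng.binomial(n_trials, psychometric(levels, 40.0, 0.2))
threshold, slope = fit_threshold(levels, n_detected, n_trials)
ci_low, ci_high = bootstrap_threshold_ci(levels, n_detected, n_trials)
```

Adding guess and lapse parameters, or replacing the grid with a numerical optimizer, are common refinements; the grid keeps the sketch free of dependencies beyond NumPy.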

(a) shows the reward scheme used across sound intensities. When a vocalization was played at very low volume (0 dB SPL), only one visual choice (triangles) was rewarded. At mid-levels (15–45 dB SPL), animals were rewarded regardless of choice to keep them engaged. At higher intensities (≥ 60 dB SPL), the reward depended on making the correct selection.

(b) plots the psychometric curves for three animals, showing how accurately each detected the vocalization at each sound level. The vertical lines mark their estimated hearing thresholds (36, 49, and 27 dB SPL, respectively), and the horizontal bars show 95% confidence intervals.

(c) presents sound waveforms and spectrograms recorded inside the marmoset housing during testing. The power spectral density reveals consistent background peaks around 6, 12, and 18 kHz—frequencies typical of marmoset vocalizations.
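Background spectral peaks like those in panel (c) can be located with a Welch-style averaged periodogram followed by a simple peak pick. The sketch below uses only NumPy; the segment length, the 1 kHz minimum peak separation, and the synthetic test signal (tones at 6, 12, and 18 kHz in noise, sampled at 48 kHz) are assumptions for illustration, not the study's recording parameters.

```python
import numpy as np


def averaged_psd(signal, fs, nperseg=1024):
    """Welch-style PSD: Hann-windowed, 50%-overlapping segments, periodograms averaged.

    Scaling is density-like but one-sided doubling is omitted; only relative
    peak heights matter here.
    """
    window = np.hanning(nperseg)
    step = nperseg // 2
    starts = range(0, len(signal) - nperseg + 1, step)
    spectra = [np.abs(np.fft.rfft(signal[i:i + nperseg] * window)) ** 2 for i in starts]
    psd = np.mean(spectra, axis=0) / (fs * np.sum(window ** 2))
    freqs = np.fft.rfftfreq(nperseg, d=1.0 / fs)
    return freqs, psd


def top_peaks(freqs, psd, k=3, min_separation_hz=1000.0):
    """Frequencies of the k strongest local maxima, at least min_separation_hz apart."""
    interior = np.arange(1, len(psd) - 1)
    is_max = (psd[interior] > psd[interior - 1]) & (psd[interior] > psd[interior + 1])
    candidates = interior[is_max]
    picked = []
    for idx in candidates[np.argsort(psd[candidates])[::-1]]:  # strongest first
        if all(abs(freqs[idx] - freqs[j]) >= min_separation_hz for j in picked):
            picked.append(idx)
        if len(picked) == k:
            break
    return sorted(float(freqs[j]) for j in picked)


# Synthetic test signal (not a recording from the study)
fs = 48_000
t = np.arange(2 * fs) / fs
rng = np.random.default_rng(0)
signal = (np.sin(2 * np.pi * 6_000 * t) + 0.7 * np.sin(2 * np.pi * 12_000 * t)
          + 0.5 * np.sin(2 * np.pi * 18_000 * t) + 0.5 * rng.standard_normal(t.size))
freqs, psd = averaged_psd(signal, fs)
peaks = top_peaks(freqs, psd)
```

Averaging many overlapping segments trades frequency resolution for a smoother noise floor, which makes stable background peaks stand out; SciPy's `scipy.signal.welch` provides the same estimate with proper one-sided scaling if SciPy is available.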

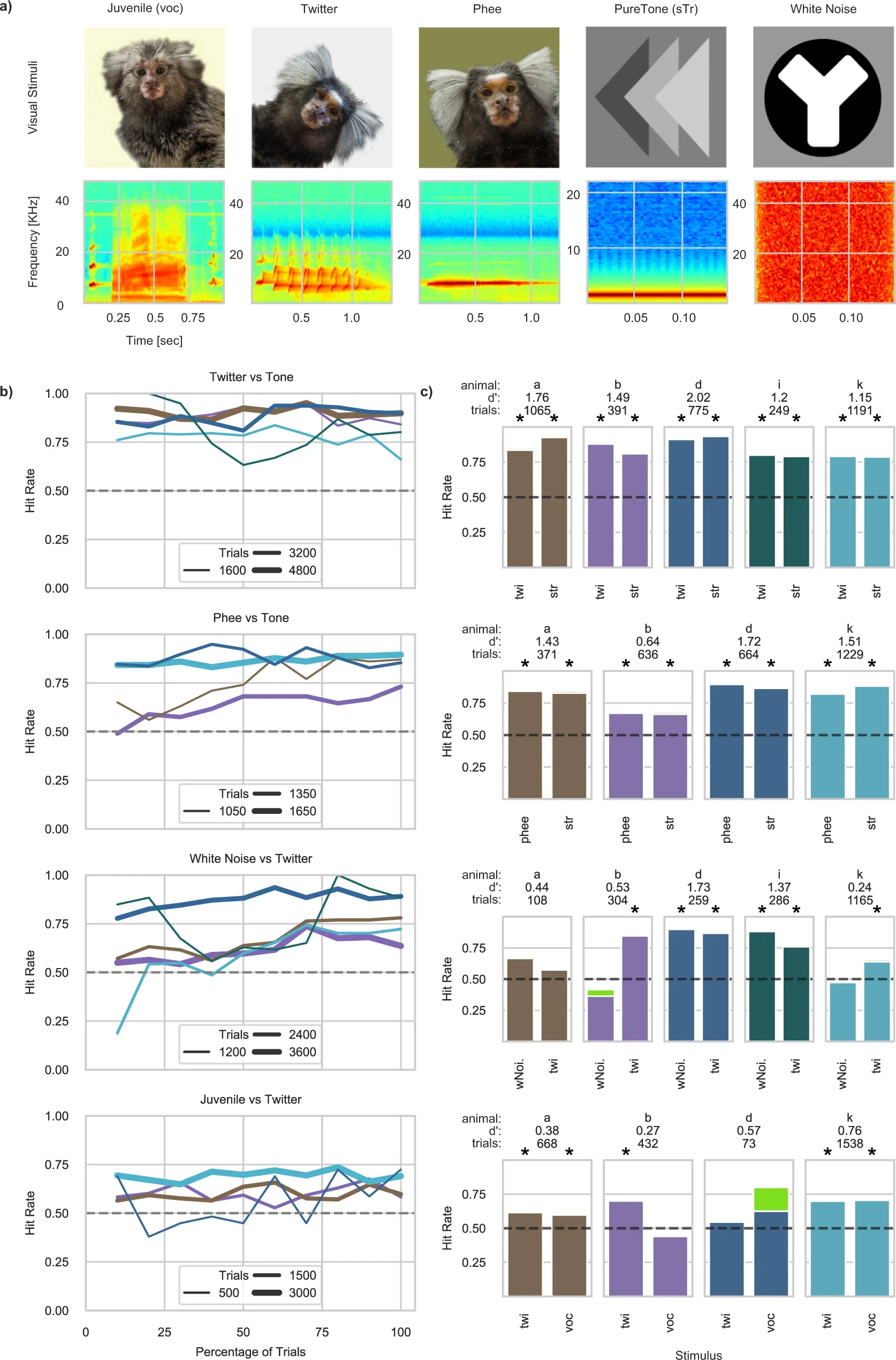

This figure illustrates how marmosets learned to discriminate between different sound types using the autonomous training system.
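Learning curves such as those in panel (b) are typically a moving average of trial outcomes, and "faster learning" can be quantified as the number of trials needed to reach a performance criterion. A minimal sketch, assuming outcomes coded 1 (hit) and 0 (miss) in chronological order; the 50-trial window and the 80% criterion are hypothetical values for illustration, since the text does not state which were used.

```python
from itertools import accumulate


def rolling_hit_rate(outcomes, window=50):
    """Hit rate over each run of `window` consecutive trials (1 = hit, 0 = miss)."""
    if window <= 0 or len(outcomes) < window:
        return []
    csum = [0, *accumulate(outcomes)]  # csum[i] = hits in the first i trials
    return [(csum[i + window] - csum[i]) / window
            for i in range(len(outcomes) - window + 1)]


def trials_to_criterion(outcomes, window=50, criterion=0.8):
    """Number of trials completed when the rolling hit rate first reaches `criterion`."""
    for start, rate in enumerate(rolling_hit_rate(outcomes, window)):
        if rate >= criterion:
            return start + window
    return None  # criterion never reached


outcomes = [0] * 50 + [1] * 50
print(trials_to_criterion(outcomes))  # 90
```

The cumulative sum makes each window an O(1) subtraction, so curves over thousands of home-cage trials stay cheap to recompute.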

(a) shows the five acoustic categories used: natural marmoset calls (“Juvenile,” “Twitter,” “Phee”) alongside synthetic tones and white noise, each shown with its paired visual cue and its spectrogram.

(b) plots learning curves (Hit Rate across trials) for several sound comparisons, showing that performance improved faster and reached higher accuracy when animals heard natural vocalizations rather than artificial sounds.

(c) summarizes individual performance (Hit Rates and sensitivity values) across animals and sound types, confirming that ecologically valid sounds supported better discrimination and engagement than artificial stimuli.
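The text does not define its sensitivity measure. A common choice is the signal-detection index d' = z(H) - z(F), where H is the hit rate, F the false-alarm rate, and z the inverse standard-normal CDF. The sketch below assumes that definition, treats one sound of each discriminated pair as the "signal" category, and applies a log-linear correction so that perfect scores still yield finite values.

```python
from statistics import NormalDist


def d_prime(hits, misses, false_alarms, correct_rejections):
    """Sensitivity d' = z(hit rate) - z(false-alarm rate).

    Log-linear correction adds 0.5 to every cell of the 2x2 outcome table,
    keeping both rates strictly between 0 and 1 so the z-scores stay finite.
    """
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    z = NormalDist().inv_cdf
    return z(hit_rate) - z(fa_rate)


print(d_prime(10, 10, 10, 10))  # 0.0: chance performance
```

Unlike the raw hit rate, d' separates discrimination ability from a bias toward one response, which matters when animals favor one side of the touchscreen.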

In short, this figure demonstrates that biologically meaningful, natural sounds enhance learning and motivation in automated auditory tasks.